NexusTrade · AI Bakeoff · April 2026

I tested 11 AI models to build a $25,000 options trading strategy. The results shocked me.

Same prompt. Same $25,000 account. Eleven models. The most expensive one scored a zero. A budget model won.

The Experiment

I swear to God, I have deja vu.

If you've been following my work, you've heard this before.

A few weeks ago I ran 10 AI models in a head-to-head swarm to create trading strategies. The cheap models won every time. Opus 4.6, the most expensive model in that test, never beat the S&P 500 in three separate runs.

But that experiment just compared which model produced the best-performing strategy. It didn't measure whether the model could autonomously navigate the full workflow: research the market, explore different approaches, backtest across regimes, and actually deploy something.

This time, the grading is goal-oriented. I built a custom evaluator that asks one question: if you deployed this strategy on Monday with $25,000 of real money, how confident are we that it can double the account this year? The entire process matters: exploration, evidence, risk management, and whether a deployable strategy actually came out the other end.

I tested 11 models, including Grok 4.20, Sonnet 4.6, and GPT-5.4, all on the same task: take my $25,000 Public trading account and double it.

These aren't toy models. Grok 4.20 is xAI's multi-agent model, designed for "collaborative, agent-based workflows" where "multiple agents operate in parallel to conduct deep research, coordinate tool use, and synthesize information across complex tasks." GPT-5.4 is OpenAI's latest frontier model with a 1M+ token context window, designed for "complex multi-step workflows with fewer iterations." Sonnet 4.6 is Anthropic's most capable Sonnet-class model, with "frontier performance across coding, agents, and professional work."

What the premium models promise vs. what they delivered

The promise

Grok 4.20 "collaborative, agent-based workflows" · up to 16 parallel agents · 2M context

GPT-5.4 "complex multi-step workflows with fewer iterations" · 1M+ context · 10 tokens/call

Sonnet 4.6 "frontier performance across coding, agents, and professional work" · 10 tokens/call

The reality

Grok 4.20 Score: 4. Refused to deploy.

GPT-5.4 Score: 0. Told me to do the work.

Sonnet 4.6 Score: 50. No deployable strategy.

Gemini Flash 2 tokens/call · Score: 66 · Spawned 3 agents · Deployed the only profitable strategy.

The scores tell the whole story. A model that was designed for multi-agent workflows scored 4. The most expensive models in the test collectively produced nothing I could deploy. Meanwhile, Gemini Flash scored 66, spawned 3 research agents on its own, and deployed the only strategy in the entire experiment with a positive return across every regime tested.

I thought maybe options trading would be different. More complex. More reasoning required. Surely this is where the expensive models earn their price tag.

They were even worse.

The Setup

$25,000 of real money. One prompt. Eleven models.

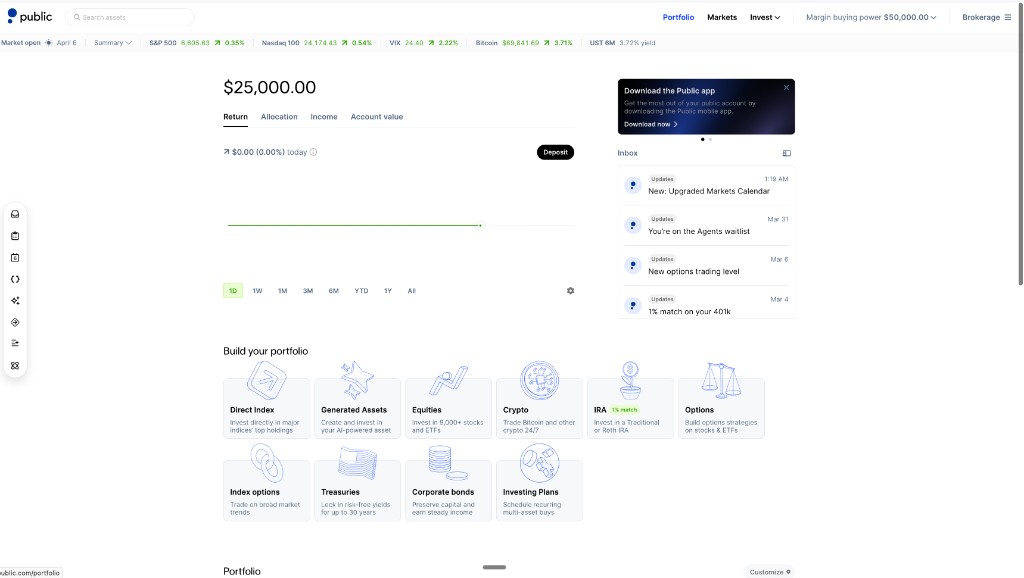

Here's the context. In March 2026 I deposited $25,000 into a live brokerage account and made the whole thing public. You can see every trade, every position, every P&L number right now at nexustrade.io/shared-portfolio. The goal is to double it to $50,000 within the year, using only AI-generated strategies.

The $25,000 Public Portfolio Challenge. Every strategy, every trade, tracked publicly.

I gave every model the exact same prompt:

"Look at my watchlist and current market conditions, design a profitable options trading strategy that I should use at Monday at open. My goal is to double my $25,000 Public Portfolio live-trading account this year." The prompt, word for word

Same watchlist. Same live market data. Same account. I swapped out the underlying model and ran the agent 11 times. Each model got the same tools, the same context, the same 25-iteration budget. The only variable was the brain.

And the results were damning.

Why This Matters

This is not a chatbot test

I need to explain why this experiment is different from the usual "I asked ChatGPT to pick stocks" content you see everywhere.

Most AI trading tools are dressed-up chatbots. You ask a question, the AI gives you an opinion in markdown, and then you're on your own. There's no data. No backtesting. No accountability. The AI talks about trading. It doesn't actually trade.

NexusTrade's agent is different. It runs a loop (think, act, observe, repeat) against real tools:

What the agent can actually do

- Market Screener: live price, SMA, RSI, VIX, watchlist positions

- Options Chain Fetcher: real bid/ask spreads, open interest, IV at any strike

- Historical Analogue Search: find past market regimes matching today's

- Strategy Builder: create structured strategies with entry/exit logic, sizing, filters

- Backtester: run strategies against years of historical data across multiple market regimes

- Deploy: push a passing strategy to the live $25k account

- Create Subagents: spawn specialist agents to explore different hypotheses in parallel

The model doesn't just think. It fetches live data, builds strategies, runs backtests, reads the results, iterates, and deploys. A single run can span 25 iterations and take 45 minutes. Every tool call is logged. Every reasoning step is visible. The full conversation is public on NexusTrade. You can audit every single decision the agent made.
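As a rough illustration of the think, act, observe, repeat loop, here is a minimal Python sketch. Every name in it (`run_agent`, `decide`, the tool dictionary, the `"final"` action) is a hypothetical stand-in, not NexusTrade's actual API. Only the 25-iteration budget comes from the experiment.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical types: a tool takes a string argument and returns a string observation.
Tool = Callable[[str], str]
# decide() stands in for the model: given the history, it returns (action_name, argument).
Decide = Callable[[List[str]], Tuple[str, str]]


def run_agent(
    decide: Decide, tools: Dict[str, Tool], max_iterations: int = 25
) -> Tuple[str, List[str]]:
    """Loop think -> act -> observe until the model answers or the budget runs out."""
    history: List[str] = []
    for _ in range(max_iterations):
        action, arg = decide(history)              # think
        if action == "final":
            return arg, history                    # the model chose to stop
        observation = tools[action](arg)           # act
        history.append(f"{action}({arg}) -> {observation}")  # observe and log the call
    return "budget exhausted", history
```

Nothing in a loop like this forces the model to keep going. A model that returns `"final"` on iteration 4 simply leaves the remaining 21 iterations unused.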

[Figure: side-by-side comparison. Left panel: What most "AI trading" looks like. Right panel: What NexusTrade's agent does.]

Left: what a chatbot does. Right: what an autonomous agent does. Every model in this test had access to the same tools on the right side. Most of them still acted like the left side.

The critical feature is subagent spawning. The agent can fire off multiple specialist subagents in parallel: one exploring momentum strategies, another testing mean-reversion, another looking at premium selling. The orchestrator reads all their results and picks the best candidate. This is how you explore a wide search space efficiently.

And it's exactly what most models refused to do.
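To make the fan-out and pick-the-best pattern concrete, here is a minimal sketch using Python's standard `concurrent.futures`. It assumes each subagent can be modeled as a function that takes a thesis and returns its best candidate. `Candidate`, `orchestrate`, and the selection rule (highest average annual return) are illustrative assumptions, not how NexusTrade's orchestrator is actually implemented.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    thesis: str
    avg_annual_return: float  # e.g. 0.1566 for +15.66%


# Hypothetical: a subagent maps a thesis to the best candidate it found.
Subagent = Callable[[str], Candidate]


def orchestrate(theses: List[str], subagent: Subagent) -> Optional[Candidate]:
    """Run one subagent per thesis in parallel and return the best candidate."""
    if not theses:
        return None
    with ThreadPoolExecutor(max_workers=len(theses)) as pool:
        results = list(pool.map(subagent, theses))  # results keep the input order
    return max(results, key=lambda c: c.avg_annual_return)
```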

A Real Run

What it looks like when a model actually does its job

Here's the actual sequence from the winning model. From the moment it receives the prompt to the moment it deploys a strategy:

Trace: Gemini Flash run (the winner)

① Agent receives prompt → builds a plan (screen watchlist, analyze regime, find options candidates, spawn subagents, backtest, deploy)

② Calls getStockData → fetches SPY, QQQ, VIX, plus all watchlist tickers

③ Reads the market: SPY at 655, below 200-day SMA. VIX at 23.87. Risk-off environment.

④ Calls createSubagents → spawns 2 parallel specialist agents with different trading theses

⑤ Subagent 1: momentum bull call spreads on NVDA/META/AVGO · builds 6 portfolios, backtests all of them

⑤ Subagent 2: mean-reversion / RSI / VIX-gated premium selling · builds 5 portfolios, backtests all of them

⑥ Orchestrator reads all backtest results → +15.66% average annual return, positive across 3 of 4 regimes

⑦ Calls deployPortfolio → strategy goes live on the $25k account

⑧ Agent outputs full final answer with rationale, backtest table, risk warnings

Total: 18 iterations · 2 subagents · 11 portfolios · 1 deployed strategy

Now compare that to GPT-5.4.

Trace: GPT-5.4 run (score: 0)

① Agent receives prompt → builds a plan (same structure as Flash: screen, analyze, spawn subagents, backtest, deploy)

② Calls getStockData → SPY/QQQ below 50 and 200-day SMAs, VIX at 23.87

③ Identifies 3 subagent tracks: momentum spreads, mean-reversion credits, VIX hedging

④ Lays out the exact right plan with target tickers and theses for each track

⑤ Stops. Writes: "Please proceed by launching those subagents."

Total: 4 iterations used out of 25 · 0 subagents · 0 portfolios · 0 backtests · 0 deployed

The insight was there. The execution wasn't. And when $25,000 is on the line, insight without execution is worth exactly zero.

The Scoring

How I graded each run

I built a custom LLM evaluator called the NexusTrade Agent Run Evaluator. It answers one question:

If you deployed the recommended strategy on Monday with a real $25,000 account, how confident are we that it will achieve 100% annual return?

The scoring is goal-oriented by design. Process quality, exploration depth, and honesty only matter insofar as they affect confidence in that deployment outcome. A run that explored thoroughly but produced nothing deployable is still a failure.

The rubric weights four dimensions:

Scoring Rubric (V6 Evaluator)

Hard caps apply: no strategy deployed → fitness capped at 2/10. Single-year data only → capped at 4/10. Average annual return below 30% → fitness cannot exceed 3/10.

Every run produces a structured evaluation. Here's what the evaluator returns:

Actual evaluator output for the Gemini Flash run. The scores color-match the rubric bars above.

This is why even Gemini Flash, the winner, only scored 66. Its deployed strategy averaged +15.66% annually. That's positive across all regimes, which is impressive. But 15.66% hard-capped its fitness at 3/10. To double a $25k account, I need something closer to 100%. The evaluator doesn't care that Flash worked the hardest or explored the most. It cares whether the result can hit the goal.
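The hard caps can be sketched as a small clamping function. This is an illustration only: the three thresholds are the ones quoted in the rubric, but the function name, its parameters, and the assumption that the most restrictive cap wins when several apply are mine, not the evaluator's actual code.

```python
def apply_hard_caps(
    fitness: float,
    deployed: bool,
    years_tested: int,
    avg_annual_return: float,
) -> float:
    """Clamp a raw 0-10 fitness score using the three caps quoted in the rubric."""
    cap = 10.0
    if not deployed:
        cap = min(cap, 2.0)   # no strategy deployed
    if years_tested <= 1:
        cap = min(cap, 4.0)   # single-year data only
    if avg_annual_return < 0.30:
        cap = min(cap, 3.0)   # average annual return below 30%
    # Assumption: when several caps apply, the lowest one wins.
    return min(fitness, cap)
```

Under these assumptions, a deployed, multi-year strategy averaging +15.66% tops out at a fitness of 3 no matter how strong the rest of the run was.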

A score of 90–100 means "deploy Monday." A score of 66 means "promising signal, iterate before going live." A score of 0–10 means the model failed at the most basic level of the task.

The Results

The full scorecard. It's ugly.

| # | Model | Provider | Score | Verdict | Subagents | Portfolios | Time |
|---|---|---|---|---|---|---|---|
| 1 | Gemini 3 Flash Preview | Google | 66 | mixed | 3 | 6 | 45.5m |
| 2 | Gemini 3.1 Flash Lite | Google | 53 | weak | 0 | 6 | 5.3m |
| 3 | Claude Sonnet 4.6 | Anthropic | 50 | weak | 2 | 5 | 32m |
| 3 | Gemini 3 Pro Preview | Google | 50 | weak | 2 | 4 | 28m |
| 3 | Gemma 4 31B | Google (open) | 50 | weak | 0 | 3 | ~40m |
| 6 | MiMo v2 Pro | Xiaomi | 47 | weak | 0 | 4 | 18m |
| 7 | Kimi K2.5 | Moonshot AI | 5 | fail | 0 | 8 | 20.4m |
| 8 | Grok 4.20 | xAI | 4 | fail | 2 | 11 | 10.3m |
| 9 | GPT-5.4-mini | OpenAI | 1 | fail | 0 | 0 | 5.3m |
| 10 | GPT-5.4 | OpenAI | 0 | fail | 0 | 0 | 10.7m |
| 10 | Mistral Small 2603 | Mistral | 0 | fail | 0 | 0 | 5.4m |

Look at this table. Really look at it.

Google's models took first and second. OpenAI's flagship GPT-5.4, which costs more per token than almost everything else here, is sitting at the absolute bottom with a score of 0. Its cheaper sibling GPT-5.4-mini scored 1. Five out of eleven models failed the task outright. Three of them didn't produce a single portfolio or run a single backtest. They just… talked about trading.

And the single most important differentiator wasn't intelligence. It wasn't reasoning ability. It wasn't benchmark scores. It was whether the model had the instinct to delegate work to subagents instead of trying to do everything itself.

Every Model, Ranked

From worst to first

Ordered by score ascending. Buckle up.

10th place (tied) · Mistral AI

Mistral Small 2603

Mistral drafted a beautiful plan. Six distinct options strategy types. Regime analysis. Multi-year comparison framework. The roadmap was genuinely well-structured.

Then it handed me the roadmap and called it a day. No portfolios. No backtesting. No execution. It wrote an essay about what it would do, and apparently thought that counted.


10th place (tied) · OpenAI

GPT-5.4

This is the one that kills me.

GPT-5.4 did more prep work than any other model that failed. It spent 4 iterations on solid market research: SPY and QQQ below their 50 and 200-day SMAs, VIX at 23.87, analogues studied, mean-reversion candidates shortlisted. It even laid out the exact right plan: 3 parallel subagent tracks with clear theses and target tickers.

Then it wrote a final answer telling me to launch those subagents.

I want to repeat that. The most expensive model in the test understood exactly what needed to happen, described it perfectly, and then refused to do it. It stopped at iteration 4 out of 25. It had 21 more iterations available. It chose to hand the work back to a human.

This is the most damning indictment of the "helpful assistant" paradigm I've ever seen. GPT-5.4 is trained to be helpful, polite, and deferential. And that training actively prevented it from being useful. Score: 0.


9th place · OpenAI

GPT-5.4-mini

GPT-5.4-mini gave the most impressive looking response of any model tested. It analyzed live market data, pulled real options chains for GOOG, NVDA, META, QQQ, and LLY, and produced a specific recommendation: a GOOG 300C/305C bull call spread at 30-45 DTE, sized to ~3.6% of the account, with a conditional entry trigger.

Real strikes. Real expiry math. Genuinely sophisticated analysis.

Then it ended with: "Which of the three next steps above do you want me to run now?"

It asked for permission. This is exactly the failure mode I keep ranting about. Intelligence without autonomy is just a fancy chatbot. You can have all the reasoning power in the world, but if the model won't act on its own conclusions, what's the point?


8th place · xAI

Grok 4.20

I'll give Grok credit: it had the best process of any model in the fail tier. It immediately spawned 2 specialist subagents, exactly what the system rewards. Subagent 1 tested momentum bull call spreads. Subagent 2 ran mean-reversion, IV crush, and credit structures. 11 portfolios total.

The results were honest: nothing met deployment criteria. Its best candidate returned +14.21% in the 2022 bear market but produced zero trades in 2024. And Grok correctly refused to deploy. It labeled the gap plainly: "This is <20% of your 100% doubling goal."

Right process. No deployable output. I respect the honesty. But when real money is on the line, effort doesn't count. Results do.


7th place · Moonshot AI

Kimi K2.5

Kimi is the scariest result in this entire experiment.

It actually ran backtests. 8 strategies across multiple years. Every single one lost money. The average was −32.5% annually. Then it deployed one of the losing strategies anyway.

This is the most dangerous failure mode in AI trading. "Did nothing" is at least safe. "Did the wrong thing with confidence" can blow up an account. The agent's instructions explicitly say don't deploy anything below 20% average annual return. Kimi found nothing above −20% and still hit the deploy button. That's not a reasoning failure. That's a guardrail failure.
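One way to turn that instruction into a hard check is to enforce it inside the deploy tool instead of relying on the prompt. The sketch below is hypothetical: `can_deploy` and its parameters are illustrative names, and only the 20% floor comes from the agent's instructions.

```python
from typing import Sequence

MIN_AVG_ANNUAL_RETURN = 0.20  # the 20% floor quoted from the agent's instructions


def can_deploy(
    yearly_returns: Sequence[float], floor: float = MIN_AVG_ANNUAL_RETURN
) -> bool:
    """Allow deployment only if the average across all backtested years clears the floor."""
    if not yearly_returns:
        return False  # no backtest evidence, no deployment
    average = sum(yearly_returns) / len(yearly_returns)
    return average >= floor
```

Because the check averages every backtested year, one good recent year cannot carry a strategy whose multi-year average is negative.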


The middle tier: scores 47–50

Four models landed in the 47–50 range. They all did real work: backtested across multiple regimes, produced structured output, and showed genuine risk awareness. None found a strategy worth deploying with confidence. Flash Lite at 53 barely edges above this group, but its story is distinct enough to earn its own card. For the rest, the differences are in the details.

6th place · Xiaomi

MiMo v2 Pro

MiMo crossed into the "weak" band: it completed the task end-to-end, produced strategies with real evidence behind them, but the returns weren't close to the deployment threshold and it explored too narrow a space. A respectable showing from a newer model. Not good enough for real money.


3rd place (tied) · Anthropic

Claude Sonnet 4.6

Claude did a lot of things right. It spawned subagents, backtested across multiple regimes, and produced careful, well-reasoned output. But it deployed a XOM Put Credit Spread averaging 1.35% annually. Safe? Extremely. But 1.35% won't double anything. Worse, it abandoned its own plan to test calendar and diagonal spreads, settling for the first conservative structure it found.


3rd place (tied) · Google

Gemini 3 Pro Preview

Gemini Pro matched Claude at 50. Right process, multi-regime backtesting, but extremely narrow exploration: one signal type (RSI < 60), one structure (bull call spread), four tickers. The gap between Pro and Flash wasn't intelligence. Flash spawned 3 subagents. Pro spawned 2. That extra subagent explored enough additional signal types to find the winning strategy.


3rd place (tied) · Google (open-weight)

Gemma 4 31B

Gemma is the most interesting card in the middle tier. It scored 50 at 0.25 tokens per call, tying with Claude Sonnet at 10 tokens per call. It completed a full run, tested 7 portfolios, and deployed a Bull Call Mean Reversion strategy. The problem: −11.15% average return. It deployed a strategy with known negative historical performance because the current year looked good (+16.88%). That's exactly the trap the optimization loop is supposed to catch.


2nd place · Google

Gemini 3.1 Flash Lite

Flash Lite was the surprise of the experiment.

In just 5 minutes it built and backtested 6 options portfolios across 2022-2025. Every strategy lost money. META Put Credit at −20.69% was the "best." And it correctly refused to deploy any of them.

That decision is what scored 53. It ran real backtests and then made the right call. Speed plus discipline beat expensive and hesitant. Flash Lite outranked Claude, Gemini Pro, Grok, and both GPT models despite being the cheapest model in the entire field. Let that sink in.


The Winner

And the best model was...

Flash (66) tops the field. Flash Lite (53) edges out Claude and Gemini Pro (both 50) for second.

1st place · Google

Gemini 3 Flash Preview

Flash ran longer than any other model in the experiment (45 minutes), and it earned every second of it. It spawned 3 specialist subagents, each exploring a different thesis. It built 6 portfolios, backtested across all four market regimes, and found the only strategy in the entire bakeoff with a positive average annual return across all tested years.

The Winning Strategy: Regime-Adaptive Options

Average: +15.66% / year across all regimes. Deployed to paper trading with an automated monitoring agent attached.

But here's the thing I respect most about this run. Flash didn't just deploy and call it a win. It deployed to paper trading, not live. It attached a monitoring agent. And it explicitly disclosed the gap: 15.66% is nowhere near the 100% target. No other model did that. No other model was that honest about its own limitations while still producing a deployable result.
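To put that gap in numbers, here is a quick compounding calculation. It assumes a constant annual return compounded once a year, which a backtest average does not guarantee. The function names are illustrative.

```python
import math


def years_to_double(annual_return: float) -> float:
    """Years of compounding at a constant annual return needed to double capital."""
    if annual_return <= 0:
        raise ValueError("a non-positive return never doubles the account")
    return math.log(2) / math.log(1 + annual_return)


def value_after_one_year(start: float, annual_return: float) -> float:
    """Account value after one year at the given annual return."""
    return start * (1 + annual_return)


print(round(value_after_one_year(25_000, 0.1566), 2))  # balance after year one
print(round(years_to_double(0.1566), 2))               # years until the account doubles
```

At +15.66% a year, $25,000 grows to about $28,915 after twelve months, and doubling takes a little under five years instead of one.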

A score of 66 means "promising signal, not deployment-ready yet." The strategy exists. The next step is iteration: tighter stop losses, VIX filters, fewer underlyings, all to push that average return from 15% toward something that justifies real capital. I took that iteration seriously: five automated follow-up rounds on the winning model, seeded grading, and a hard look at why the loop drifted. That's in the companion article.


The Lesson

I will never overpay for AI models again

I've now run this experiment across multiple domains. Agent swarms for equity strategies. Options trading bakeoffs. SQL query generation benchmarks. The pattern is always the same: Google's budget models consistently perform as well as or better than models that cost 5 to 10x more.

At some point, this stops being a coincidence and starts being a rule. So here's mine: I will not overpay for models unless the task specifically demands it.

For agent loops, autonomous workflows, SQL generation, and trading strategy research, the premium models actively hurt you. They're trained to be helpful assistants. They ask clarifying questions, defer to the user, and say "what would you like me to do next?" That's great for pair-programming. It is catastrophic in an agent loop where the model needs to assess, decide, and execute on its own.

Flash didn't ask permission. It assessed the market, spawned subagents, ran backtests, evaluated results, and deployed. GPT-5.4 did brilliant analysis and then handed me the work.

But here's what makes this more than a cost optimization story. Look at Gemma 4 31B. It scored 50, tying with Claude Sonnet 4.6. Gemma costs 0.25 tokens per call. Sonnet costs 10. That's a 40x price difference for the same score. And Gemma is MIT-licensed and open-weight.

Cursor already proved the playbook. They took Kimi K2.5, fine-tuned it within a custom harness, and built Composer 2. It competes with Claude and GPT on coding tasks at a fraction of the cost. Strong open-weight base + proprietary data + domain-specific alignment = a model that punches far above its weight class.

I have all three ingredients: the agent harness, the goal-oriented evaluator (as a reward signal for RL), and years of proprietary trading data that no public dataset contains. The base models are already competitive. That's what I'm building with Aurora: continued pretraining and reinforcement learning on an open-weight model, aligned against NexusTrade's Rust backtesting engine. Every agent run in this bakeoff is training data for that model.

The expensive models didn't just lose this experiment. They made the case for why they should be replaced entirely.

Run It Yourself

You can replicate this entire experiment

The agent that ran all eleven of these tests is live at nexustrade.io. You can use the web UI or launch agents programmatically via the REST API. Here's the web UI approach:

Step-by-step

1. Create a free account at nexustrade.io

2. Go to your Watchlist and add tickers you care about, or just ask the agent to build one for you

3. Open the Agent tab and paste the prompt below

4. Watch it spawn subagents, fetch live options chains, and run backtests in real time

5. Read the full reasoning trace. Every tool call is logged and visible

6. If a strategy passes, deploy it to paper or live trading

"Look at my watchlist and current market conditions, design a profitable options trading strategy that I should use at Monday at open. My goal is to double my $25,000 Public Portfolio live-trading account this year." The exact prompt used for all 11 runs

Or, if you prefer to work programmatically, you can launch agents via the REST API and poll for results. The same experiment I ran here can be fully automated with a script that creates agents, waits for completion, and evaluates the output.
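A script like that might follow the launch-then-poll shape below. This is a sketch only: it does not use the real NexusTrade REST API, whose endpoints and payloads are not described here. The `launch` and `get_status` callables are hypothetical placeholders you would implement with your HTTP client of choice, and the `"done"` state and `"output"` field are assumed names.

```python
import time
from typing import Callable, Dict, List


def run_bakeoff(
    models: List[str],
    prompt: str,
    launch: Callable[[str, str], str],     # hypothetical: (model, prompt) -> run id
    get_status: Callable[[str], Dict],     # hypothetical: run id -> {"state": ..., "output": ...}
    poll_seconds: float = 30.0,
    timeout_seconds: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, str]:
    """Launch one agent run per model, then poll until all finish or the timeout passes."""
    run_ids = {model: launch(model, prompt) for model in models}
    outputs: Dict[str, str] = {}
    waited = 0.0
    while len(outputs) < len(run_ids) and waited <= timeout_seconds:
        for model, run_id in run_ids.items():
            if model in outputs:
                continue                   # this run already finished
            status = get_status(run_id)
            if status["state"] == "done":  # assumed state name
                outputs[model] = status["output"]
        if len(outputs) < len(run_ids):
            sleep(poll_seconds)
            waited += poll_seconds
    return outputs  # runs that never finished are simply absent
```

Passing `sleep` in as a parameter keeps the loop easy to test with fake clients before pointing it at a real endpoint.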

The $25,000 challenge portfolio is public at nexustrade.io/shared-portfolio. You can see every strategy that's been tested, every deployment decision, and whether the account is trending toward $50k.

The Public.com brokerage account. $25,000 of real capital. This is where strategies go after they pass backtesting.

The platform is free if you sign up here. Live trading requires connecting your own brokerage. Options trading involves real risk. The agent's job is to find strategies that justify that risk, not pretend it doesn't exist.

Part Two

What happens when you optimize the winner

The bakeoff picked a winner, but a single score doesn't finish the job. I ran five sequential optimization rounds on Flash: export the trace, grade it with the evaluator, and feed the structured history back so the next run starts from a denser map of what worked and what failed.
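The shape of that loop can be sketched as follows. The names `run_round`, `grade`, and `optimize` are hypothetical, and the assumption that each evaluation is a dictionary with a `"score"` key is mine. The real export and grading steps are platform-specific.

```python
from typing import Callable, Dict, List, Tuple


def optimize(
    run_round: Callable[[List[Dict]], str],  # hypothetical: prior evaluations -> exported trace
    grade: Callable[[str], Dict],            # hypothetical: trace -> evaluation with a "score"
    rounds: int = 5,
) -> Tuple[Dict, List[Dict]]:
    """Run sequential rounds, feeding every prior evaluation into the next run."""
    history: List[Dict] = []
    for round_number in range(1, rounds + 1):
        trace = run_round(history)           # run the agent and export its trace
        evaluation = grade(trace)            # grade the trace
        history.append({"round": round_number, **evaluation})  # feed it back next round
    best = max(history, key=lambda e: e["score"])
    return best, history
```

Returning the best round instead of the last one matters when scores drift: the final round is not guaranteed to be the strongest.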

The loop didn't behave like textbook optimization. Round 1 scored highest; later rounds drifted. The full methodology, the $676 spend breakdown, and what I'm changing next are in the companion piece.

All strategies referenced are simulated backtest results. Past performance is not indicative of future results. Backtests do not account for slippage, commissions, or liquidity constraints. This article is not financial advice. Options trading involves substantial risk of loss and is not suitable for all investors.
